You might be feeling overwhelmed with all the AWS compute options to choose from.

AWS keeps releasing new compute services, and it can be difficult to figure out which option is best for your use case.

In this article, we’re going to discuss seven of the most popular compute services and how they compare across five categories:

- Setup

- Reliability

- Cost

- Maintenance

- Abstraction

So let’s get into it.

Prefer video content? Check out my YouTube video on the topic below.


EC2

EC2 stands for Elastic Compute Cloud. It’s one of the oldest services launched by AWS. The service lets you rent virtual servers (instances) in a broad selection of CPU, memory, storage, and networking configurations. Some are tiny micro instances that cost a few pennies per hour, while others are extremely powerful and can cost hundreds of dollars per day.

Abstraction

In terms of abstraction, EC2 scores poorly. This is because EC2 is a low-level building block that you can use however you please. This can be a good or a bad thing depending on your use case. It’s great if you require fine-grained control over your instances and their configuration. If not, the additional setup and configuration you’ll need to do may feel overwhelming.

Setup

For setup, EC2 scores poorly as well. Setup requires deciding which instance type is appropriate for your workload and learning a number of related concepts beyond EC2 itself, such as VPCs, security groups, and key pairs. Sure, you can get away with launching a basic instance using the default settings, but any non-trivial use case requires additional learning.
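Choosing an instance type mostly comes down to matching vCPUs and memory to your workload at the lowest price. The sketch below picks the cheapest entry from a small catalog that meets a requirement. The vCPU and memory figures match these EC2 instance types, but the hourly prices are assumed placeholders, not live AWS pricing; check the EC2 pricing page for your region before relying on them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: int
    memory_gib: float
    hourly_usd: float  # ASSUMED illustrative price, not live AWS pricing


# Specs match these EC2 types; prices are placeholders for illustration only.
CATALOG = [
    InstanceType("t3.micro", 2, 1, 0.0104),
    InstanceType("t3.small", 2, 2, 0.0208),
    InstanceType("t3.medium", 2, 4, 0.0416),
    InstanceType("m5.large", 2, 8, 0.096),
    InstanceType("c5.xlarge", 4, 8, 0.17),
    InstanceType("m5.xlarge", 4, 16, 0.192),
]


def cheapest_fit(min_vcpus: int, min_memory_gib: float) -> Optional[InstanceType]:
    """Return the cheapest catalog entry meeting both minimums, or None."""
    candidates = [
        it for it in CATALOG
        if it.vcpus >= min_vcpus and it.memory_gib >= min_memory_gib
    ]
    return min(candidates, key=lambda it: it.hourly_usd, default=None)


if __name__ == "__main__":
    choice = cheapest_fit(min_vcpus=2, min_memory_gib=3)
    print(choice.name if choice else "no fit")  # t3.medium
```

In practice you would also weigh factors this sketch ignores, such as burstable (t3) versus fixed performance, network bandwidth, and storage options.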

Reliability

For reliability, EC2 scores Very Good. If the underlying hardware fails, instances can be automatically recovered onto healthy hardware, or replaced when they run in an Auto Scaling group. Capacity can also be reserved in advance or provisioned on demand. Overall, it’s an extremely reliable service with consistent uptime.

Cost

For cost, EC2 scores Very Good. This is thanks to the flexibility of instance types, which lets you select just the right amount of resources for your workload. You can also purchase Reserved Instances, which require a 1- or 3-year commitment but can provide significant savings in the long run.
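Whether a commitment pays off depends on how many hours you actually run the instance, because a reservation is billed for every hour of its term. The sketch below compares a year on demand against a reserved rate; both rates are hypothetical inputs, and `reserved_rate` is assumed to be the effective hourly rate with any upfront payment spread over the term.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def yearly_cost(hourly_rate: float, utilization: float = 1.0) -> float:
    """Cost of one instance for a year when it runs `utilization` of all hours."""
    return hourly_rate * HOURS_PER_YEAR * utilization


def reservation_savings(on_demand_rate: float, reserved_rate: float,
                        utilization: float) -> float:
    """Yearly savings (negative means a loss) from a 1-year reservation.

    On-demand is billed only for hours used; the reservation is billed
    for every hour of the term whether or not the instance runs.
    """
    on_demand = yearly_cost(on_demand_rate, utilization)
    reserved = yearly_cost(reserved_rate)  # always billed at 100%
    return on_demand - reserved


def break_even_utilization(on_demand_rate: float, reserved_rate: float) -> float:
    """Fraction of hours above which the reservation becomes cheaper."""
    return reserved_rate / on_demand_rate
```

With a hypothetical $0.10 on-demand rate and a $0.06 effective reserved rate, an always-on instance saves about $350 per year, while one that runs only half the time loses money; the break-even point is 60% utilization.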

Maintenance

Finally, EC2 scores Poor for maintenance. Renting your own virtual machines means you have to worry about things like operating system patching, driver updates, and other infrastructure patches. Some of these tasks can be automated, but you, as the user, are responsible for keeping your EC2 instances up to date.

In summary, EC2 is a low-level service that may be a good option if you need direct control over your infrastructure. But what if we want a bit more abstraction over our infrastructure using an orchestration framework? For that, we can use ECS, our next service for discussion.

ECS

ECS stands for Elastic Container Service. It is a highly scalable container orchestration service that launches and maintains your containerized applications. You can use it to run ad hoc compute tasks, or use its service mode to run something like a web app across a fleet of containers. ECS has two main launch types: one uses EC2 instances to host your containers, and the other is a serverless option (more on that later).

Abstraction

With the EC2 launch type, ECS runs your containers as “tasks” on EC2 instances. The ECS container agent installed on each instance reports task status so that ECS can monitor and maintain task health. This configuration is a bit better than using EC2 on its own, since we get the benefits of containers. However, we still need to worry about the underlying EC2 instances and things like networking between our instances and the containers themselves. Because of this, I give ECS a Satisfactory score for abstraction.

Setup

Setup can be a bit daunting for ECS. You need to configure your containers and your VPC network to work together properly, and you also need to define resource requirements (CPU and memory) for each container. If you’re using more advanced features like load balancing or blue/green deployments, there can be a significant learning curve. Overall, I give it a Satisfactory.
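Per-container resource requirements live in an ECS task definition. The sketch below builds one as a Python dict using the parameter names of the ECS `RegisterTaskDefinition` API (`family`, `networkMode`, `containerDefinitions`, and so on). The family name, image, and port passed in are hypothetical; the output could be saved as JSON and registered with the AWS CLI, as noted in the comment.

```python
import json


def build_task_definition(family: str, image: str, cpu_units: int,
                          memory_mib: int, container_port: int) -> dict:
    """Parameters for ECS RegisterTaskDefinition (EC2 launch type)."""
    return {
        "family": family,
        "networkMode": "awsvpc",  # each task gets its own network interface in your VPC
        "requiresCompatibilities": ["EC2"],
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "cpu": cpu_units,      # 1024 CPU units = 1 vCPU
                "memory": memory_mib,  # hard limit in MiB; the container is killed above it
                "essential": True,
                "portMappings": [
                    {"containerPort": container_port, "protocol": "tcp"}
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical values for illustration.
    params = build_task_definition("web-app", "my-registry/web-app:1.0", 256, 512, 8080)
    # Save this output to taskdef.json and register it with, for example:
    #   aws ecs register-task-definition --cli-input-json file://taskdef.json
    print(json.dumps(params, indent=2))
```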

Reliability

ECS scores Very Good for reliability. This is because you can rely on the ECS scheduler, working with the container agent on each instance, to maintain the health of your cluster, including the placement of your tasks on instances. The instances themselves are EC2 instances, which can be automatically recovered or replaced (for example, by an Auto Scaling group) in case of failure.

Cost

For cost, you only pay for the underlying EC2 instances and nothing extra for ECS itself. The only minor additional cost is container image storage (for example, in Amazon ECR), which is typically very low.

Maintenance

For maintenance, we have concerns similar to those we raised with EC2, mainly around infrastructure maintenance such as software vulnerabilities and security patches. Our scoring is consistent with EC2.

This past section has been about using EC2 instances to host your software. But what if we want to rely on AWS to manage the infrastructure for us? This is where Fargate comes in.

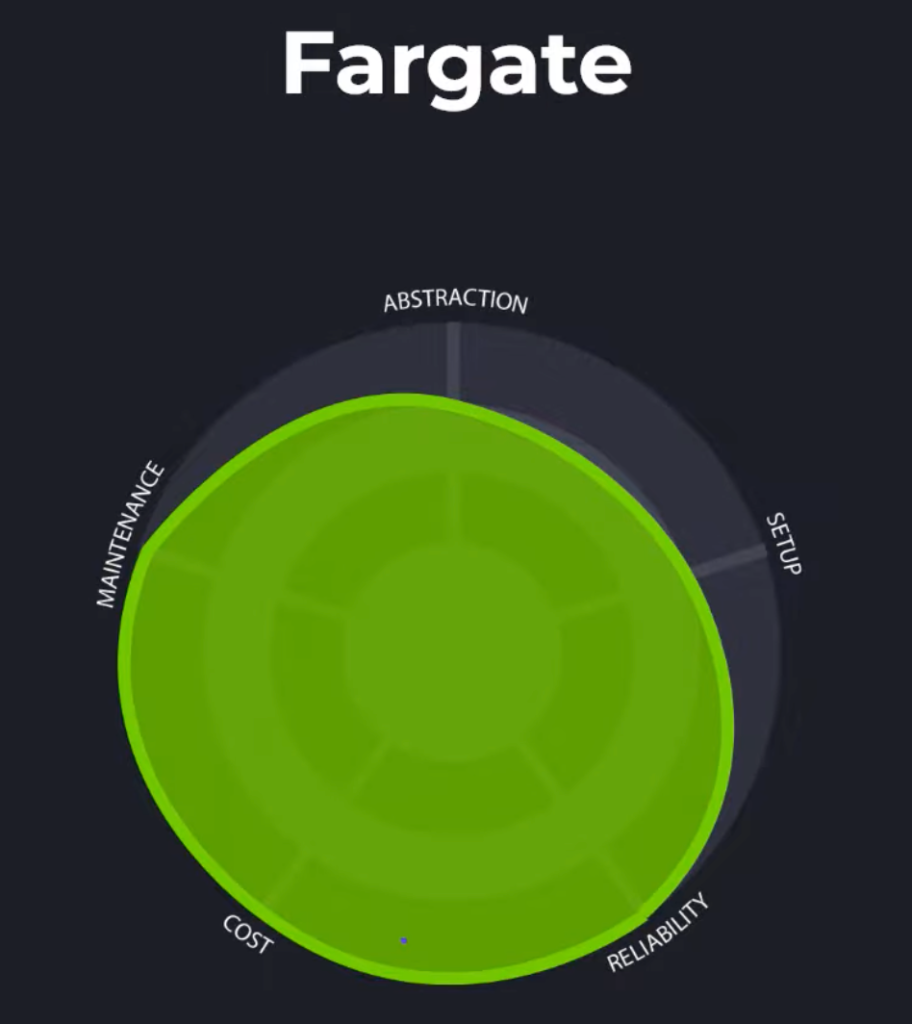

Fargate

Fargate is an alternative launch type for ECS. It uses a completely “serverless” configuration in which AWS manages the underlying infrastructure on your behalf. This lets you focus more on application development and less on infrastructure setup and management.

Abstraction

For abstraction, Fargate is a step above EC2 and vanilla ECS. The serverless mode means we no longer think about machines and instead focus on our containers and the tasks they run in. We give Fargate a Good for abstraction.

Setup

Setup is better as well. Not worrying about infrastructure means there’s one big chunk of the setup process that we can avoid entirely.

Reliability

In terms of reliability, Fargate takes care of both the tasks that you run and the underlying infrastructure they run on. It’s the ultimate form of reliability, where you worry about very little beyond your application. Top marks here.

Cost

Cost is based on the amount of resources you provision for your tasks. The more vCPUs and memory you configure for a task, the more you pay. Another cost factor is ephemeral storage, if you need more than the 20 GB included with each task. You can also save up to 70% if your workloads can use Fargate Spot. This offers a better price point, but AWS can interrupt Spot tasks at any time (with a two-minute warning), so it’s only appropriate for workloads that can tolerate interruption.
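The pricing factors above combine into a simple estimate: vCPU-hours plus memory GB-hours, plus any storage beyond the included 20 GB, with an optional Spot discount. In the sketch below all rates are caller-supplied assumptions, and applying the Spot discount to vCPU and memory only is a simplification of ours, not a statement of AWS’s billing rules.

```python
INCLUDED_STORAGE_GB = 20  # ephemeral storage included with each Fargate task


def fargate_task_cost(vcpus: float, memory_gb: float, storage_gb: float,
                      hours: float, vcpu_hour_rate: float, gb_hour_rate: float,
                      storage_gb_hour_rate: float, spot_discount: float = 0.0) -> float:
    """Estimated cost of running one task for `hours`.

    All rates are assumptions supplied by the caller. `spot_discount`
    (0.0 to 0.7) is applied to vCPU and memory charges only, which is a
    simplifying assumption of this sketch.
    """
    if not 0.0 <= spot_discount <= 0.7:
        raise ValueError("Fargate Spot discounts go up to 70%")
    compute = (vcpus * vcpu_hour_rate + memory_gb * gb_hour_rate) * hours
    extra_storage_gb = max(0.0, storage_gb - INCLUDED_STORAGE_GB)
    storage = extra_storage_gb * storage_gb_hour_rate * hours
    return compute * (1 - spot_discount) + storage
```

For example, with hypothetical rates of $0.04 per vCPU-hour, $0.004 per GB-hour, and $0.0001 per extra storage GB-hour, a 1 vCPU / 2 GB task with 30 GB of storage costs about $0.49 for 10 hours on demand and about $0.15 with a 70% Spot discount.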

Maintenance

Similar to setup, there’s almost no maintenance for Fargate configurations beyond software deployments. Top marks here.

So far we’ve been steadily increasing in terms of abstraction. The pinnacle of infrastructure abstraction is AWS Lambda – our next compute option.

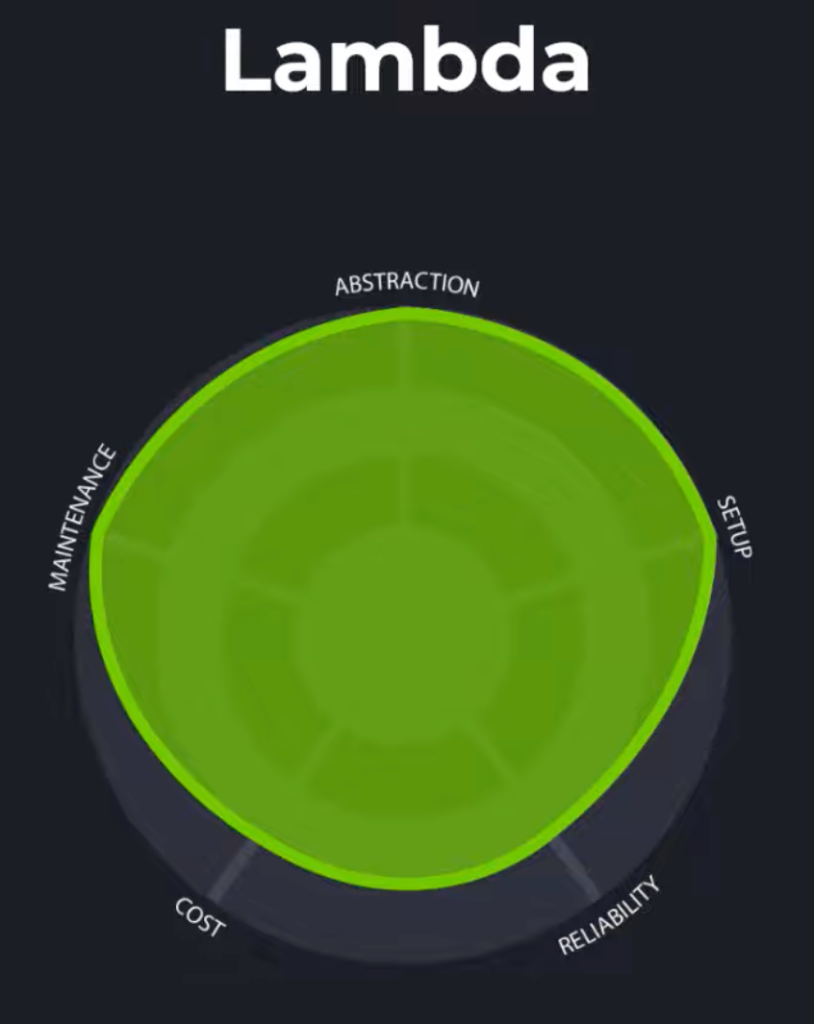

Lambda

Lambda is a completely serverless compute option that goes one step further than Fargate. With Lambda, you don’t worry about infrastructure at all; you just worry about code. Lambda handles all the scaling behind the scenes. Another great asset of Lambda is that it integrates easily with other AWS services such as API Gateway, SQS, SNS, Step Functions, S3, and more. It’s a very powerful and popular service that lets you quickly build microservices or backends for web apps.
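To make the “just worry about code” idea concrete: a Python Lambda function is a handler that receives an `event` and a `context`. When SQS triggers the function, the event carries a `Records` list, and each record’s `body` holds the raw message string. The minimal sketch below assumes producers send JSON with a hypothetical `order_id` field.

```python
import json


def lambda_handler(event, context):
    """Handle a batch of SQS messages delivered to a Lambda function.

    Assumes each message body is JSON with a hypothetical "order_id" field.
    """
    order_ids = []
    for record in event.get("Records", []):
        message = json.loads(record["body"])  # body is the raw message string
        order_ids.append(message["order_id"])
    # Returned here only so the function is easy to test locally.
    return {"processed": order_ids}
```

Because the handler is a plain function, you can call it locally with a hand-built event before deploying it.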

Abstraction

As you might imagine, Lambda gets top points for abstraction. The fact that there are no servers to manage and you’re only worrying about code makes it easy to understand and use Lambda.

Setup

For setup, minimal effort is required. You simply upload your code and Lambda takes care of the rest. You also configure your function’s memory setting. Lambda allocates CPU power in proportion to memory, so raising the memory setting also gives you more vCPU capacity, which can speed up your invocations. This can help if you have heavy workloads that need more resources.
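Because CPU scales with memory, you can roughly estimate the compute share a memory setting buys. AWS documents that a function with 1,769 MB has the equivalent of one vCPU, and memory can be set between 128 MB and 10,240 MB. The sketch below is a linear approximation built on that figure, not an exact model of Lambda’s allocation.

```python
MIN_MEMORY_MB = 128
MAX_MEMORY_MB = 10_240
MB_PER_VCPU = 1_769  # AWS documents ~1,769 MB as the equivalent of one vCPU


def approx_vcpus(memory_mb: int) -> float:
    """Rough linear estimate of the vCPU share for a Lambda memory setting."""
    if not MIN_MEMORY_MB <= memory_mb <= MAX_MEMORY_MB:
        raise ValueError(f"memory must be {MIN_MEMORY_MB}-{MAX_MEMORY_MB} MB")
    return memory_mb / MB_PER_VCPU
```

The linear estimate gives about 5.8 vCPUs at the maximum setting, whereas AWS documents up to 6 vCPUs there, so treat the result as a ballpark figure.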

Reliability

For reliability, Lambda is almost top-scoring, but it suffers from a phenomenon called cold starts. A cold start occurs when Lambda has to create a new execution environment to handle an invocation of your function, such as on the first request or when scaling out to handle more concurrent requests. Invocations that reuse an already warm environment avoid this overhead. The result is higher latency for the requests that hit a cold start.

This phenomenon means hosting APIs on Lambda isn’t always the wisest choice, especially for latency-sensitive applications with consistent latency requirements. Because of this, Lambda scores Good.
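Since warm invocations reuse an execution environment, a common way to reduce the impact of cold starts is to do expensive setup at module scope, so it runs once per environment instead of on every request. The sketch below simulates this locally; `_expensive_init` is a hypothetical stand-in for loading configuration, SDK clients, or models.

```python
import time

INIT_COUNT = 0


def _expensive_init():
    """Hypothetical stand-in for slow setup such as creating SDK clients."""
    global INIT_COUNT
    INIT_COUNT += 1
    return {"loaded_at": time.time()}


# Module scope runs once per execution environment, i.e. during a cold start.
RESOURCES = _expensive_init()


def lambda_handler(event, context):
    # Warm invocations reuse RESOURCES instead of re-initializing.
    return {"loaded_at": RESOURCES["loaded_at"], "init_count": INIT_COUNT}
```

Calling the handler repeatedly in the same process shows the initialization running only once, which mirrors what happens inside a single warm environment.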

Cost

In terms of cost, Lambda lets you be very efficient. You’re billed based on the number of times you invoke your function, the duration of each invocation, and the amount of memory you configure for the function. However, Lambda isn’t well suited to long-running jobs, since a single invocation can run for at most 15 minutes. For those, you’ll want to use something like AWS Fargate.
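The three billing factors combine into a request charge plus a compute charge measured in GB-seconds (duration times configured memory). In the sketch below the prices are caller-supplied assumptions and the free tier is ignored; check AWS’s Lambda pricing for your region and architecture.

```python
MAX_DURATION_MS = 15 * 60 * 1000  # Lambda's maximum timeout is 15 minutes


def lambda_monthly_cost(invocations: int, avg_duration_ms: float, memory_mb: int,
                        price_per_million_requests: float,
                        price_per_gb_second: float) -> float:
    """Estimate a month's Lambda bill, ignoring the free tier.

    Prices are assumptions supplied by the caller.
    """
    if avg_duration_ms > MAX_DURATION_MS:
        raise ValueError("a single invocation cannot exceed 15 minutes")
    request_cost = invocations / 1_000_000 * price_per_million_requests
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return request_cost + gb_seconds * price_per_gb_second
```

For example, 3 million invocations averaging 200 ms at 512 MB amount to 300,000 GB-seconds; with assumed prices of $0.20 per million requests and $0.0000166667 per GB-second, that comes to roughly $5.60 for the month.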

Maintenance

Top marks here; there’s nothing to maintain for AWS Lambda. All the infrastructure is fully managed by AWS.

Let’s switch gears now to something easier to work with that lets you get started really quickly with common application setups. I’m talking about Amazon Lightsail.

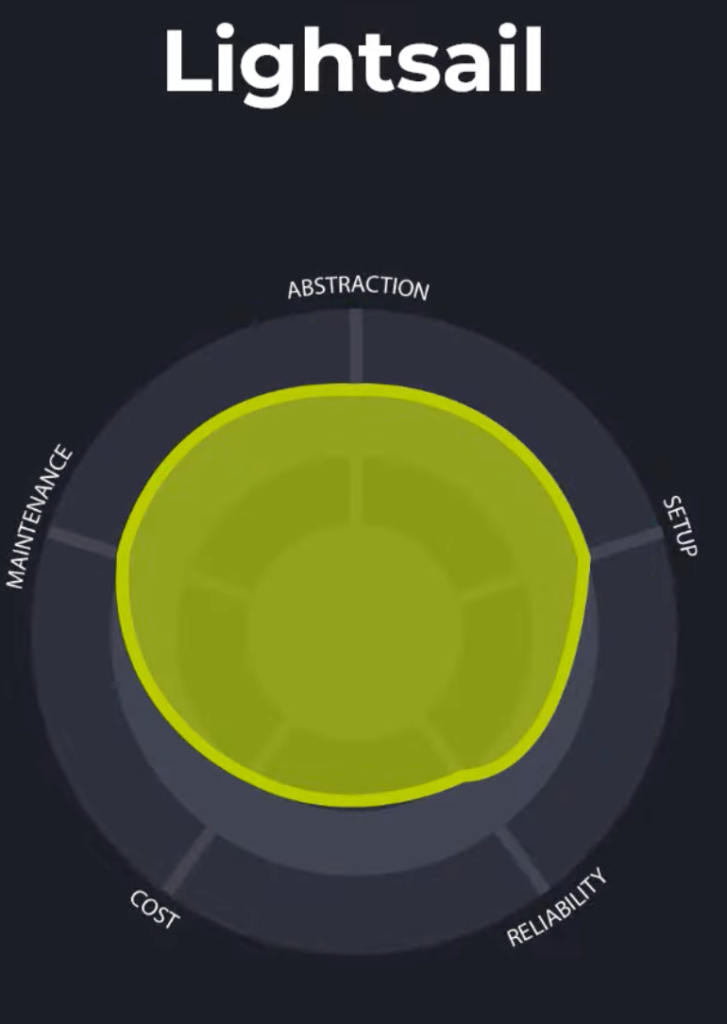

Lightsail

Abstraction

Lightsail is a service that operates at a medium level of abstraction. It lets you follow a guided experience to launch web applications, websites, WordPress blogs, and other common application configurations in just a few clicks. You can also add multiple instances behind a load balancer if you need to scale. Overall, Lightsail is an attempt to make creating and managing compute much simpler. If you’re not happy with Lightsail’s level of abstraction and want more control, you can upgrade to EC2 with just a few clicks by exporting a snapshot of your instance.

Setup

Setup for Lightsail is minimal and straightforward. You simply select from the available blueprints, such as LAMP, MEAN, or WordPress, and Lightsail handles the rest. You may, however, need to be familiar with load balancing, DNS setup, and CDNs if your application requires them.

Reliability

A slight gripe with Lightsail is its concept of burst capacity. If your instance’s CPU utilization stays above its baseline for a sustained period, it will use up all of its burst capacity and be throttled back to its baseline CPU performance. You can mitigate this by using a more powerful instance, but it can cause inconsistent performance during periods of sustained load.
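The burst behavior can be illustrated with a simplified credit model: running below the baseline earns credits, running above it spends them, and once credits run out the instance is held at its baseline. This is a hypothetical model for intuition only, with credits measured in made-up “percent-minutes”; Lightsail’s actual accounting, accrual rates, and caps are not reproduced here.

```python
def simulate_burst(demand_pct, baseline_pct, start_credits, max_credits):
    """Return the CPU % actually delivered each minute under a simplified,
    hypothetical credit model (credits are in percent-minutes)."""
    credits = start_credits
    delivered = []
    for demand in demand_pct:
        if demand <= baseline_pct:
            # Below baseline: earn the unused headroom as credits, up to a cap.
            credits = min(max_credits, credits + (baseline_pct - demand))
            delivered.append(demand)
        else:
            # Above baseline: spend credits to cover the excess, if any remain.
            spend = min(demand - baseline_pct, credits)
            credits -= spend
            delivered.append(baseline_pct + spend)
    return delivered
```

With a 10% baseline and 50 credits, three minutes of 50% demand deliver 50%, then 20%, then only 10% once the credits are gone, which is the kind of sudden slowdown to watch for under sustained load.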

Cost

Lightsail scores slightly lower for cost as well. Lightsail is essentially a wrapper on top of other AWS services, and you pay a premium for that convenience. The pricing model lets you pick from preset bundles. You can pay as little as $3.50 a month for a small machine or up to $160 a month for a monster.

Maintenance

Lightsail scores pretty well for maintenance. My only issue is the concern with burst capacity: you need to monitor your metrics to ensure your instance isn’t exhausting its burst capacity too often, since doing so can seriously impact performance.

Lightsail offers a simplified experience for launching pre-configured apps. With it, you don’t have visibility into the underlying infrastructure. Our next option is similar in that it lets you launch and deploy common apps, except this time you maintain control over all underlying infrastructure components. We’re talking about AWS Elastic Beanstalk.

Elastic Beanstalk

Elastic Beanstalk is an orchestration service that makes it easy to deploy and scale web applications and backend services. You simply upload your code or Docker container, and Elastic Beanstalk handles provisioning, deployments, monitoring, and scaling.

Abstraction

For abstraction, Beanstalk scores Satisfactory. But this isn’t necessarily a bad thing. After all, if you’re using Elastic Beanstalk, you probably want control over the infrastructure. So take from this what you will.

Setup

Setup is a breeze with Elastic Beanstalk. You can deploy your source code from a Git repository, from your IDE, or by uploading it directly. Beanstalk automatically sets up the EC2 instances, launches your application, monitors its health, and scales it when needed. Top marks here.
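As an example of how little code the upload needs: Elastic Beanstalk’s Python platform, by default, looks for a file named `application.py` exposing a WSGI callable named `application` (the path is configurable via the `WSGIPath` option). A minimal standard-library sketch follows; the response text is illustrative.

```python
# application.py


def application(environ, start_response):
    """Minimal WSGI app; Beanstalk's Python platform looks for this name by default."""
    path = environ.get("PATH_INFO", "/")
    body = f"Hello from {path}\n".encode("utf-8")
    start_response("200 OK", [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ])
    return [body]
```

To try it locally before deploying, you can serve it with the standard library: `wsgiref.simple_server.make_server("", 8000, application).serve_forever()`.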

Reliability

Since Beanstalk operates on top of EC2, its reliability similarly scores top marks. Additionally, automatic scaling lets your application handle very large workloads.

Cost

There are no additional charges for using Elastic Beanstalk. You simply pay for the resources it launches and manages for you. No cost premium here.

Maintenance

Elastic Beanstalk regularly schedules platform updates that provide fixes, software updates, and new features. Elastic Beanstalk handles the large majority of this work automatically, but ultimately you still need to keep an eye on the underlying infrastructure.

Overall, Elastic Beanstalk lets you create and deploy scalable applications while keeping control over the underlying infrastructure. But what if we want the same functionality WITHOUT having to worry about infrastructure at all? That’s the perfect use case for App Runner.

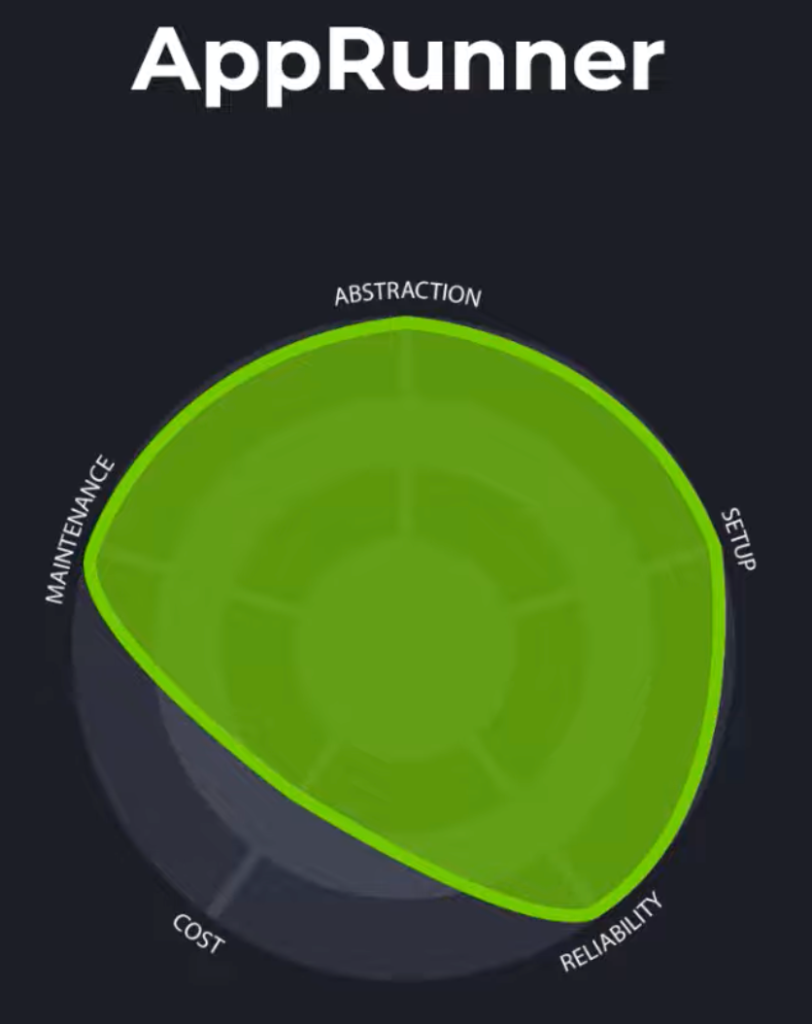

App Runner

App Runner is a relatively new AWS service that lets developers quickly deploy containerized web apps and APIs without having to worry about infrastructure. It offers much of what Elastic Beanstalk does, but abstracts away the infrastructure for you. The service is fully managed, but everything it runs is a container: you deploy either a container image or source code that App Runner builds into one.

Abstraction

For abstraction, App Runner scores top marks. Infrastructure, including compute, load balancing, container orchestration, security, and networking, is all handled for you. There’s no visibility into the underlying infrastructure; it’s all managed on your behalf by AWS.

Setup

Setup is quite minimal. It requires you to select the amount of memory, the number of vCPUs, and the concurrency your application needs. Beyond that, App Runner takes care of the rest.
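The concurrency setting drives scaling: each instance handles up to a configured maximum number of concurrent requests, and App Runner adds or removes instances between a minimum and maximum count. The sketch below shows that arithmetic in simplified form. The defaults (100 concurrent requests per instance, 1 to 25 instances) reflect App Runner’s defaults as we understand them, so treat them as assumptions; the real scaler also reacts over time rather than instantly.

```python
import math


def instances_needed(concurrent_requests: int, max_concurrency: int = 100,
                     min_size: int = 1, max_size: int = 25) -> int:
    """Simplified estimate of how many instances a given load requires.

    Defaults are assumptions mirroring App Runner's auto scaling defaults.
    """
    wanted = math.ceil(concurrent_requests / max_concurrency)
    return max(min_size, min(max_size, wanted))
```

For example, 250 concurrent requests at 100 per instance need 3 instances, while idle traffic still keeps the minimum of 1 instance provisioned.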

Reliability

Reliability scores top marks. Although it’s serverless like AWS Lambda, App Runner largely avoids the cold start phenomenon, because it keeps a minimum number of provisioned container instances warm and ready to respond instantly to traffic. It’s a great choice for APIs or web apps requiring consistent performance.

Cost

Cost scores slightly lower for App Runner because you also pay for the provisioned container instances it keeps warm, even when they’re idle. Beyond that, you pay based on the number of active container instances and the vCPU and memory configured for each.
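The provisioned-versus-active distinction is what makes App Runner’s bill different from Lambda’s. Our understanding is that memory is billed for every hour an instance is provisioned, while vCPU is billed only while it actively handles requests; verify this against AWS’s current pricing page. The rates in the sketch are caller-supplied assumptions.

```python
def app_runner_instance_cost(vcpus: float, memory_gb: float, total_hours: float,
                             active_hours: float, vcpu_hour_rate: float,
                             gb_hour_rate: float) -> float:
    """Cost of one App Runner instance over a billing period.

    Assumes memory is billed for all provisioned hours and vCPU only for
    active hours. Rates are assumptions supplied by the caller.
    """
    if not 0 <= active_hours <= total_hours:
        raise ValueError("active_hours must be between 0 and total_hours")
    memory_cost = memory_gb * gb_hour_rate * total_hours
    vcpu_cost = vcpus * vcpu_hour_rate * active_hours
    return memory_cost + vcpu_cost
```

With hypothetical rates of $0.064 per vCPU-hour and $0.007 per GB-hour, a 1 vCPU / 2 GB instance provisioned for 720 hours but active for only 100 of them costs about $16.48, most of it for the memory of the warm instance.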

Maintenance

Finally, for maintenance, App Runner handles everything for you: no infrastructure patches, OS updates, or anything like that. Top marks here.

Summary

I hope this article has helped you understand, in broad strokes, the variety of compute options across AWS. As you can tell, there are many pros and cons to consider as you decide which technology is right for your project. If you’re still trying to decide, check out the more in-depth comparison articles below.